As of 2026, text-to-image and text-to-video generation have evolved from experimental toys into the primary production engines for global ad agencies, game studios, and corporate brands. From the "plug-and-play" convenience offered by closed-source giants to the limitless flexibility of the open-source world, we explore in detail more than 40 models shaping the visual AI ecosystem of 2026.

PART 1: IMAGE GENERATION MODELS (Text-to-Image)

A. Leaders in Photo-Realism and Artistic Aesthetics

1. Midjourney v6 / v7

For artistic aesthetics, compositional depth, and cinematic lighting, Midjourney remains the benchmark most other models are measured against. With v6 and v7, its prompt understanding improved markedly: long, natural-language descriptions are followed far more closely than in earlier versions. Its fine detail, from skin pores to the weave of fabrics, makes it a staple for concept artists and commercial photographers. With the web interface now in full release, it remains the first choice when the goal is simply a beautiful image.

2. DALL-E 3 (OpenAI)

Thanks to its tight integration with ChatGPT, it is among the most user-friendly image generators available. Where other models reward careful prompt engineering, DALL-E 3 works from plain natural language, because ChatGPT expands the request into a detailed prompt before generation. It handles spatial relationships between objects reliably. Its guardrails are strict, but its safeguards against copyright infringement and its strong prompt adherence make it well suited to rapid storytelling.

3. Imagen 3 (Google DeepMind)

Trained on Google's large datasets, this flagship model tackles the long-standing weak points of AI imagery: photorealism and human anatomy (faces, hands). Imagen 3 avoids the overly smooth "AI look" by reproducing lens distortion, film grain, and natural depth of field. Its outputs can be hard to tell apart from photographs, which suits advertising and stock photography.

4. Grok 2 Image / xAI

Developed by Elon Musk's xAI and integrated into the X platform, it is the market's "boundary-pushing" generator. Its image generation launched on Black Forest Labs' Flux model, and Grok applies looser copyright and content filters than its competitors. That makes it popular for topical humor (memes) and fast social media content.

5. Meta Emu / Imagine

Built into WhatsApp, Instagram, and Facebook, it is a very fast image generation engine. Focused on social media communication, it is used for creating avatars, making stickers, and generating backgrounds for stories. Paired with Meta's Llama-based assistant, it picks up the context of the current chat and renders visuals in near real time.

B. Models Focused on Design, Typography, and Corporate Workflows

6. Ideogram v3

It made its name as one of the first models to render legible text inside images reliably. It excels at poster designs, t-shirt prints, neon signs, and typographic visuals, blending the text into the chosen art style with few spelling errors. It is a go-to tool for graphic designers creating references.

7. Recraft v3

It is one of the few professional tools that directly generates infinitely scalable vector graphics (SVG). It is a real time-saver for logo designs, icon sets, and brand identities. Its style controls store a brand's color palette (hex codes) and style guide, so that generated visuals stay in the same brand language.

8. Adobe Firefly Image 3

It is the enterprise-safe option for commercial projects, as it is trained exclusively on Adobe Stock, openly licensed content, and public domain data. Built into Photoshop, Firefly powers the Generative Fill feature for region-level image editing and background replacement inside a professional workflow.

9. Leonardo.ai Phoenix

A full studio environment designed for game developers and concept artists. Alongside its proprietary "Phoenix" model, the platform offers tools like ControlNet, Image-to-Image, pose copying, and 3D texture generation in a single interface. You can also fine-tune a model by uploading your own dataset.

10. Canva Magic Media

Its AI integration targets people without design skills. While you are building a social media post or presentation, it lets you drop the illustration you need straight onto the page, and the results automatically adapt to the color palette and overall template of the design.

11. Figma Magic Design

Built directly for UI/UX designers. It can generate a full-screen application interface from text: type "a modern e-commerce homepage" and it returns an editable, layered design with concept visuals, icons, and consistent typography.

C. Open Source Revolutionaries

12. Flux.1 (Black Forest Labs)

It is the most popular open model of 2026, challenging the dominance of Midjourney and DALL-E. With 12 billion parameters, it offers strong photorealism and accurate in-image typography. Its openly released variants (schnell under Apache 2.0, dev under a non-commercial license) can be run on your own computer, which brought production-grade quality to the open ecosystem.

13. Stable Diffusion 3.5 / 4.0 (Stability AI)

SD3.5 and the newer 4.0 release use the MMDiT (Multimodal Diffusion Transformer) architecture first introduced with SD3, a major step forward in following complex prompts. The family's greatest strength is one of the largest fine-tuning and LoRA ecosystems of any image model: you can teach the model almost any face or art style you want.

14. SDXL Turbo / SD3 Turbo

The models that make image generation feel real-time. Using Adversarial Diffusion Distillation (ADD), they cut sampling from dozens of steps to between one and four, so an image can appear in roughly a tenth of a second on a fast GPU and update as you type. They are the natural choice for sessions that need instant feedback.

15. PixArt-Sigma

An efficiency standout that generates images at up to 4K resolution with only about 600M parameters. It is a hardware-friendly open-source model that lets individual users with limited VRAM (8GB and below) produce high-quality concept art.

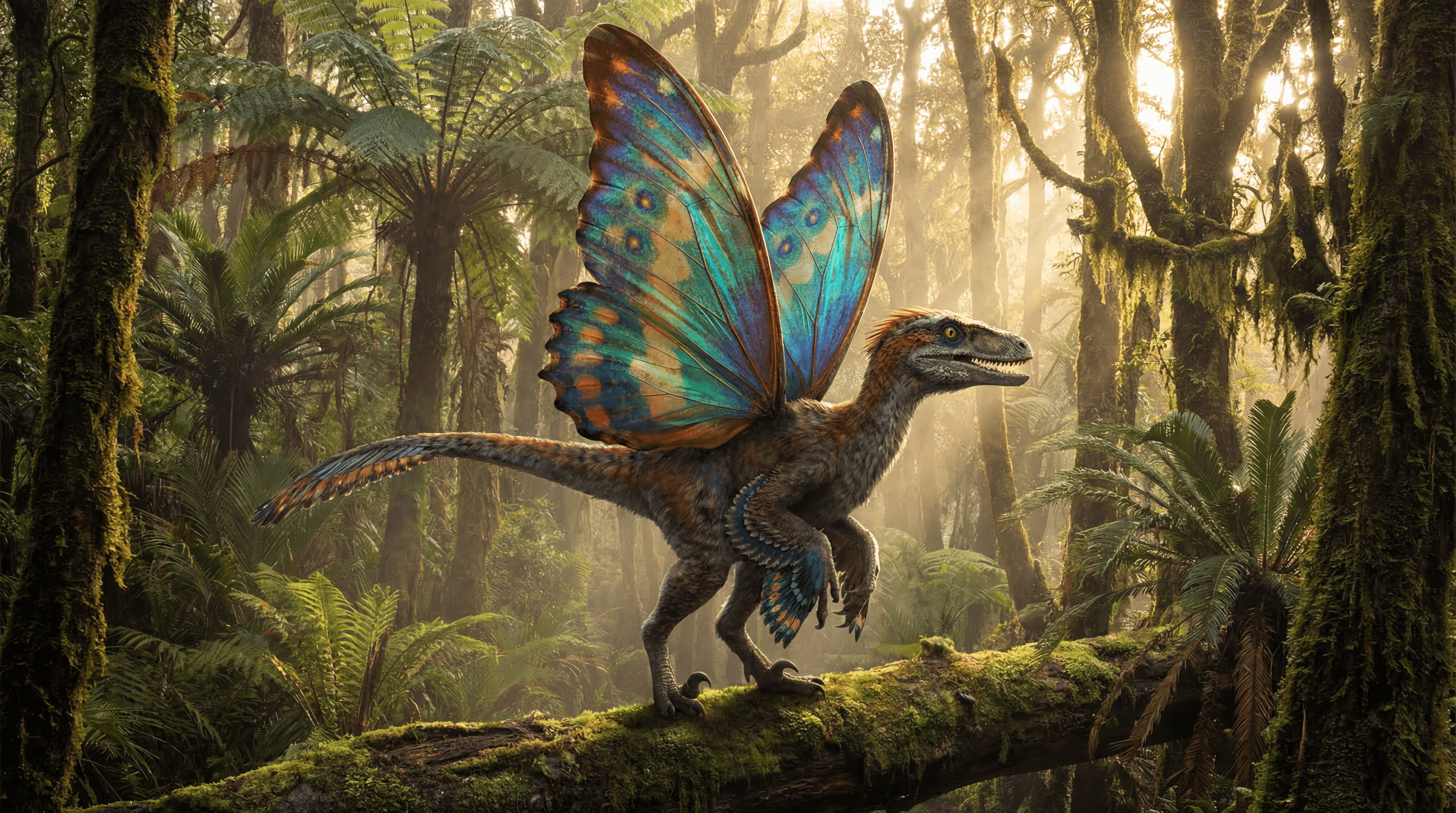

16. AuraFlow

A fully open-source flow-matching model with 6.8 billion parameters. It follows prompts closely and does well at in-image text, detailed fantasy environments, and anime-style work.
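The parameter counts quoted in this section (about 0.6B for PixArt-Sigma, 6.8B for AuraFlow, 12B for Flux.1) give a rough idea of how much memory the weights alone occupy. The sketch below is a back-of-the-envelope estimate, not a measurement: it multiplies the parameter count by the bytes per parameter at a given precision, and it ignores text encoders, the VAE, and activations, all of which add to real VRAM use. The function name and the precision table are illustrative choices, not part of any library.

```python
# Rough weight-memory estimate from parameter count and numeric precision.
# Assumption: only the main diffusion model's weights are counted; text
# encoders, VAE, and activation memory are NOT included.

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}

# Parameter counts as quoted in this guide.
MODELS = {
    "PixArt-Sigma": 0.6e9,
    "AuraFlow": 6.8e9,
    "Flux.1": 12e9,
}


def weight_memory_gib(num_params: float, precision: str = "fp16") -> float:
    """Return the size of the weights in GiB at the given precision."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


if __name__ == "__main__":
    for name, params in MODELS.items():
        cells = ", ".join(
            f"{p}: {weight_memory_gib(params, p):.1f} GiB"
            for p in ("fp16", "int8", "int4")
        )
        print(f"{name:<13} {cells}")
```

Read this way, the 600M-parameter model fits comfortably on an 8GB card at half precision, while a 12B-parameter model typically needs quantization or offloading on consumer hardware.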

17. Würstchen v3 / Cascade

An architecture that runs diffusion in an extremely compact latent space (about 42x spatial compression, versus the 8x typical of Stable Diffusion). Working on such small latents makes the model much cheaper to train and run. It is a sensible engine for startups that care about the cost/performance ratio.
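To make the 42x figure concrete, the sketch below compares the latent grid a 1024x1024 image maps to at 42x spatial compression with the grid at 8x, the factor used by Stable Diffusion's VAE (included here only as a familiar baseline). It is plain arithmetic under the assumption that the compression factor applies per side and is rounded down; the helper names are hypothetical.

```python
# Latent-grid arithmetic for a spatial compression factor.
# Assumption: the factor applies to each side of the image and the
# resulting latent side length is rounded down to a whole number.


def latent_side(image_side: int, spatial_factor: int) -> int:
    """Side length of the latent grid for a square image."""
    return image_side // spatial_factor


def latent_positions(image_side: int, spatial_factor: int) -> int:
    """Number of spatial positions the diffusion model has to process."""
    side = latent_side(image_side, spatial_factor)
    return side * side


if __name__ == "__main__":
    image_side = 1024
    for label, factor in (("8x baseline", 8), ("42x compression", 42)):
        side = latent_side(image_side, factor)
        print(f"{label}: {side}x{side} latent, "
              f"{latent_positions(image_side, factor)} positions")
```

The diffusion stage at 42x works on a 24x24 grid instead of 128x128, which is where most of the training and inference savings come from.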

18. Playground v3

Rooted in open-source culture, Playground's proprietary model is strong at vivid color and modern "digital art" aesthetics. It offers professional tools such as image editing and masking through a very simple interface.

D. Corporate Market, Alternative Powers, and Asian Giant Models

19. Amazon Titan Image Generator v2

An e-commerce and enterprise-scale model for companies building on the AWS cloud. It can place product photos into different backgrounds in seconds. It comes with copyright indemnity commitments, and its violence/toxicity filters are stricter than most.

20. Kolors (Kuaishou)

Released as open source by Kuaishou, it is one of Asia's most capable image generators. Using the ChatGLM language model as its text encoder, it understands Chinese prompts in depth and reproduces aesthetic details specific to Asian cultures.

21. HunyuanDiT (Tencent)

Built on the Diffusion Transformer architecture, this open-source model performs especially well on Chinese calligraphy and on complex architectural structures that require fine detail. Integrated into the Tencent ecosystem, it is widely used in the Chinese gaming industry.

22. Ernie ViLG (Baidu)

Developed by Baidu, often called "China's Google," the model targets the local market and is especially culturally accurate for prompts involving historical Chinese figures or specific Asian mythology.

23. Kandinsky 3.1 / 4.0

A capable open-source model from the laboratories of the Russian tech giant Sber (formerly Sberbank). It is particularly strong in artistic styles such as "abstract art," "oil painting," and "surrealism," and can step outside the typical AI look to produce more organic visuals.

24. DeepFloyd IF

Operating in pixel space rather than in a latent space, the model was well ahead of its time in spelling words correctly inside the image. It is valuable for signage and font design projects.

25. Juggernaut (RunDiffusion)

A community fine-tune of Stable Diffusion XL built specifically for cinematic photography. It specializes in 85mm lens effects, studio lighting, and skin texture in portrait photography, approaching the look of a Vogue or National Geographic cover.

PART 2: VIDEO GENERATION MODELS (Text/Image-to-Video)

A. Feature-Length, Physics Rules, and Cinematic Producers

26. Sora (OpenAI)

The pioneer that introduced the "world simulator" concept to the industry and reset expectations for video generation. Capable of minute-long clips, it is the usual reference point for object permanence. It handles physics, reflections in glass, and complex camera pans convincingly, though not without occasional errors.

27. Veo (Google DeepMind)

Google's most advanced AI for producing cinematic 1080p video and a direct rival to Sora. Developed in close connection with the YouTube ecosystem, it shows a strong grasp of film grammar, drone shots, and editing techniques.

28. Gen-3 Alpha (Runway)

A widely used video AI among professional editors and post-production teams. With motion brushes, users can specify which object moves in which direction with fine-grained control, which makes it a practical editing assistant.

29. Kling Video (Kuaishou)

It pushes the limits with 1080p resolution, 30 frames per second, and continuous video generation of up to 2 minutes. It is known for rendering complex human movement with little deformation and has become a leading engine for AI series in the Asian market.

30. Luma Dream Machine

A popular model known for its accessibility, capable of generating physically consistent video quickly. Its keyframe feature lets you set the start and end images of a clip, and the model generates the transition between them with smooth, 3D-consistent motion.

B. Next-Generation "Real-Time" and Synchronized Audio-Video Models

31. LTX 2.3 (Lightricks)

A 22-billion-parameter open-source model. It stands out by producing native 4K video with synchronized audio in a single pass: the audio (for example, the sound of breaking glass) is synthesized together with the image.

32. Helios (ByteDance / Canva / PKU)

A novel architecture capable of generating a full 60-second video at real-time speed on a single consumer-grade GPU. As soon as you enter the prompt, the video starts playing on screen while it is still being generated.

33. Pika 2.0 (Pika Labs)

Stands out for its animation, lip-sync, and post-added sound effect capabilities. It can move a character's mouth to match text you provide and lets you change the motion in a specific region of the video.

34. Lumiere (Google)

Generates the full temporal duration of the video in a single pass using a "Space-Time U-Net," rather than producing keyframes and filling in between them. This sharply reduces inconsistencies between the beginning and end of a clip, as well as background flickering.

35. Haiper 2.0

Focuses on producing 2 to 4-second "high-action" clips. In fast scenes such as jumping or spilling liquids, it renders motion blur and movement convincingly, which makes for strong transitions in commercials.

C. Open Source and Workflow Models

36. CogVideoX (Zhipu AI)

A model built on a 3D VAE that made open-source video generation broadly accessible. Thanks to its relatively low VRAM consumption, it can run on standard gaming computers. It is notable for consistent text-to-video results.

37. Mochi 1 (Genmo)

A high-fidelity open-source video model built on an asymmetric diffusion transformer architecture. It challenges closed-source models on hard-to-simulate motion such as fluid dynamics (water, smoke) and cloth.

38. Stable Video Diffusion - SVD (Stability AI)

From Stability AI, the company behind the leading open-source image models, this is one of the most dependable models for animating an existing still image (Image-to-Video). It produces cinematic motion such as camera pans and tilts.

39. Vidu (ShengShu Technology)

A model with a "Multi-Camera" feature: it can render the same scene, character, and event from several camera angles at once (for example, a wide shot and an over-the-shoulder close-up).

40. Morph Studio

A "node-based" video production workflow platform. It acts as a "film set" for AI by combining various APIs like Stability, Runway, and Pika into a single fluid production pipeline.
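As a rough illustration of what "node-based" means in practice, the sketch below wires three stages into a small dependency graph and runs them in order. It is not Morph Studio's API: the stage functions are hypothetical stand-ins that only return descriptive strings, where a real pipeline would call an image model, a video model, and an audio tool. Only the standard-library `graphlib` module (Python 3.9+) is used.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, Tuple

# Hypothetical stand-ins for real model calls; each just labels its input.
def generate_still(inputs: Dict[str, str]) -> str:
    return f"still({inputs['prompt']})"

def animate(inputs: Dict[str, str]) -> str:
    return f"video({inputs['still']})"

def add_sound(inputs: Dict[str, str]) -> str:
    return f"with_audio({inputs['clip']})"

# node name -> (function, {argument name: name of the upstream node})
Node = Tuple[Callable[[Dict[str, str]], str], Dict[str, str]]
GRAPH: Dict[str, Node] = {
    "still": (generate_still, {"prompt": "prompt"}),
    "clip": (animate, {"still": "still"}),
    "final": (add_sound, {"clip": "clip"}),
}


def run_graph(graph: Dict[str, Node], seeds: Dict[str, str]) -> Dict[str, str]:
    """Run every node once, after all of its upstream nodes have run."""
    results = dict(seeds)
    deps = {name: set(wiring.values()) for name, (_, wiring) in graph.items()}
    for name in TopologicalSorter(deps).static_order():
        if name in results:
            continue  # seed values (e.g. the prompt) are already available
        if name not in graph:
            raise KeyError(f"no seed value or node defined for '{name}'")
        fn, wiring = graph[name]
        results[name] = fn({arg: results[src] for arg, src in wiring.items()})
    return results


if __name__ == "__main__":
    print(run_graph(GRAPH, {"prompt": "red kite over a harbor"})["final"])
```

Swapping a stage for a different model then means replacing one function, while the wiring between nodes stays the same.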

41. Leonardo Motion

An integrated module that turns static visuals into smooth, cinemagraph-quality animations. Using its "Motion" brushes, it is well suited to producing short looping videos with minimal deformation.

42. Open-Sora

A community effort to reproduce Sora's closed technology in the open. The best-known implementations are led by HPC-AI Tech (Open-Sora) and a Peking University group (Open-Sora Plan), with code and weights published for anyone to build on. It has become a symbol of resistance against AI monopolization in 2026.

PART 3: COMPARATIVE ANALYSIS AND SYNTHESIS

1. Cost and Performance Curve

Large agencies commonly run local open-source models (Flux.1, CogVideoX) without per-image limits during brainstorming, then switch to closed models (Midjourney, Veo) for the final render. On-premise generation replaces per-call API fees with a fixed hardware cost, so the marginal cost per image approaches zero as volume grows.
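The trade-off between per-image API fees and an up-front hardware purchase can be expressed as a simple break-even calculation. The sketch below is illustrative only: every figure in it (hardware price, local running cost, API price) is a made-up placeholder, not a quote from any vendor, and the function name is hypothetical.

```python
import math


def break_even_images(hardware_cost: float,
                      local_cost_per_image: float,
                      api_cost_per_image: float) -> int:
    """Number of images after which local generation becomes cheaper overall.

    hardware_cost:        one-off purchase price of the local machine
    local_cost_per_image: running cost (e.g. electricity) per local image
    api_cost_per_image:   price charged per image by a hosted API
    """
    saving = api_cost_per_image - local_cost_per_image
    if saving <= 0:
        raise ValueError("local generation must be cheaper per image than the API")
    return math.ceil(hardware_cost / saving)


if __name__ == "__main__":
    # Placeholder figures for illustration only.
    HARDWARE_COST = 2000.0
    LOCAL_COST_PER_IMAGE = 0.002
    API_COST_PER_IMAGE = 0.04
    n = break_even_images(HARDWARE_COST, LOCAL_COST_PER_IMAGE, API_COST_PER_IMAGE)
    print(f"Local generation pays for itself after about {n} images")
```

The higher the brainstorming volume, the sooner that point is reached, which is why high-volume ideation tends to move on-premise while low-volume final renders stay on hosted models.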

2. Ease of Use vs. Pixel Control

DALL-E 3 and Canva are ideal for fast results, while those who want fine pixel control (direction, motion brushes, lighting) should use ComfyUI, Leonardo, and Runway Motion Brush. Ease of use comes with black-box behavior, whereas pixel control gives the artist authority over the result.

3. Censorship, Copyright, and Corporate Security

For major brands, Adobe Firefly and Amazon Titan are positioned as the lowest-risk options on copyright, backed by licensed training data and indemnification. Independent artists who want fewer content restrictions should look at Grok 2, Flux, and open-source video models.

CONCLUSION

In 2026, the 40+ AI models listed in this guide are no longer used as isolated tools but are chained into "Agentic Workflows." The future lies not in having the single best model, but in building the workflow architecture that lets these models hand work to one another smoothly.